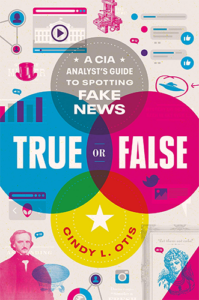

Cindy Otis (@cindyotis_) is a former CIA military analyst specializing in disinformation threat analysis and counter-messaging, and is the author of True or False: A CIA Analyst’s Guide to Spotting Fake News.

What We Discuss with Cindy Otis:

- What is fake news, and why does it spread six times faster than real news?

- How is fake news different from satire?

- How does misinformation differ from disinformation?

- The historical consequences of using fake news to influence a population.

- How to spot fake news and inoculate ourselves (and our children) against its intended impact.

- And much more…

Like this show? Please leave us a review here — even one sentence helps! Consider including your Twitter handle so we can thank you personally!

To investigate the evolution of fake news and its weaponization in the age of social media, we’re joined by ex-CIA analyst Cindy Otis, author of True or False: A CIA Analyst’s Guide to Spotting Fake News. Here, we discuss what fake news is and why it’s so quick to take root, how misinformation differs from disinformation, what happens when satire is spread as fact, how people in strange places get rich inventing lies that destabilize democracy, and how we can inoculate ourselves — and our children — against the intended impact of fake news. And no, the irony of dishing over fake news with someone who used to be in the CIA wasn’t lost on either of us! Listen, learn, and enjoy!

Please note that some of the links on this page (books, movies, music, etc.) lead to affiliate programs for which The Jordan Harbinger Show receives compensation. It’s just one of the ways we keep the lights on around here. Thank you for your support!

Sign up for Six-Minute Networking — our free networking and relationship development mini course — at jordanharbinger.com/course!

This Episode Is Sponsored By:

Taped at The Venice West in L.A., Jordan Harbinger Live Presented By Hyundai features an interview with the best-selling author and host of The Daily Stoic podcast, Ryan Holiday. Catch it on LiveOne here!

- Pluralsight: Try Pluralsight at pluralsight.com/vision

- Hyundai: Find out more about the IONIQ 5 here

- HVMN: Go to HVMN.me/jordan for 20% off Ketone-IQ

- Thuma: Go to thuma.co/jordan and enter code JORDAN at checkout for a $25 credit

- IPVanish: Save 73% off a yearly subscription at ipvanish.com/jordan

Miss the conversation we had with Tristan Harris, a former Google design ethicist, the primary subject of the acclaimed Netflix documentary The Social Dilemma, co-founder of The Center for Humane Technology, and co-host of the podcast Your Undivided Attention? Catch up with episode 533: Tristan Harris | Reclaiming Our Future with Humane Technology here!

Thanks, Cindy Otis!

If you enjoyed this session with Cindy Otis, let her know by clicking on the link below and sending her a quick shout out at Twitter:

Click here to thank Cindy Otis at Twitter!

Click here to let Jordan know about your number one takeaway from this episode!

And if you want us to answer your questions on one of our upcoming weekly Feedback Friday episodes, drop us a line at friday@jordanharbinger.com.

Resources from This Episode:

- True or False: A CIA Analyst’s Guide to Spotting Fake News by Cindy L. Otis | Amazon

- Cindy Otis | Website

- Cindy Otis | Twitter

- Cindy Otis | Instagram

- Cindy Otis | Medium

- Cindy L. Otis | Goodreads

- The Age-Old Problem of “Fake News” | Smithsonian Magazine

- QAnon is a Nazi Cult, Rebranded | Just Security

- Misinformation vs. Disinformation: Here’s How to Tell the Difference | Reader’s Digest

- ‘I Was a Macedonian Fake News Writer’ | BBC Future

- Were the Jack the Ripper Letters Fabricated by Journalists? | Smithsonian Magazine

- Media Bias Chart | Ad Fontes Media

- China Uses YouTube Influencers to Spread Propaganda | The New York Times

- Laowhy86 | How the Chinese Social Credit Score System Works Part One | Jordan Harbinger

- Laowhy86 | How the Chinese Social Credit Score System Works Part Two | Jordan Harbinger

- “Fake News” May Have Limited Effects beyond Increasing Beliefs in False Claims | the Harvard Kennedy School Misinformation Review

- Facts and Myths about Misperceptions | Journal of Economic Perspectives

- Satire and Parody | The Arthur W. Page Center

- America’s Finest News Source | The Onion

- Fake News You Can Trust | Babylon Bee

- Punk News Comin’ Your Way | The Hard Times

- 30 Funny Responses by Gullible People Who Believed These Articles from the Onion Were Real | Bored Panda

- Mick West | How to Debunk Conspiracy Theories | Jordan Harbinger

- Education for Death: The Making of the Nazi (Walt Disney, 1943) | Internet Archive

- The Psychological Tricks Used to Help Win World War II | BBC Culture

- Propaganda and Reality: Hittites vs. Pharaoh Ramesses | Judith Starkston

- Russia-Ukraine Disinformation Tracking Center | NewsGuard

- Clint Watts | Surviving in a World of Fake News | Jordan Harbinger

- Electoral Systems in the United States | FairVote

- How China Is Interfering in Taiwan’s Election | Council on Foreign Relations

- Worldwide Propaganda Network Built by the CIA | The New York Times

- Troll Farms: Not the Stuff of Fairy Tales | News Literacy Project

- Can Americans Tell Factual From Opinion Statements in the News? | Pew Research Center

- Inventing Conflicts of Interest: A History of Tobacco Industry Tactics | American Journal of Public Health

- Which Experts Should You Listen to during the Pandemic? | Scientific American Blog Network

- ZDoggMD | Debunking Plandemic COVID-19 Pseudoscience | Jordan Harbinger

- Burned to Death Because of a Rumour on WhatsApp | BBC News

- Lynchings Fueled by Rumor Hit Anti-trafficking Drives in India | Reuters

- 2022 Buffalo Shooting | Wikipedia

- Dorothy Martin, the Christmas Eve Alien Prophecy, and the Psychology of Belief | The Atlantic

- Five Everyday Examples of Cognitive Dissonance | Healthline

- Confirmation Bias and the Power of Disconfirming Evidence | Farnam Street

- The Fake News of Orson Welles: The War of the Worlds at 80 | The National Endowment for the Humanities

- Jason DeFillippo | Website

- Betteridge’s Law of Headlines | Wikipedia

- Operation InfeKtion: How Russia Perfected the Art of War | NYT Opinion

- Jack Barsky | Deep Undercover with a KGB Spy in America Part One | Jordan Harbinger

- Jack Barsky | Deep Undercover with a KGB Spy in America Part Two | Jordan Harbinger

- The Definitive Fact-Checking Site and Reference Source for Urban Legends, Folklore, Myths, Rumors, and Misinformation | Snopes

- Stand Up for the Facts | PolitiFact

- Hoax-Slayer | Twitter

- Wayback Machine | Internet Archive

- The News Literacy Project | Checkology

- Tristan Harris | Reclaiming Our Future with Humane Technology | Jordan Harbinger

- Renee DiResta | Dismantling the Disinformation Machine | Jordan Harbinger

715: Cindy Otis | Spotting Fake News Like a CIA Analyst

[00:00:00] Jordan Harbinger: Shout out to Pluralsight, friends of ours and yours. Leading technology teams around the world trust Pluralsight to bring skill development and engineering insights to the forefront of the developer experience, increasing productivity, efficiency, satisfaction, and retention.

[00:00:13] Coming up next on The Jordan Harbinger Show.

[00:00:16] Cindy Otis: The most successful conspiracy theories, disinformation campaigns from foreign governments, that sort of thing, those narratives were based on riling up people's emotions. When we're overpowered by things like anger or fear or sadness, depression, that sort of thing, we're not as willing to use critical literacy skills to, you know, look at where information is coming from. We're more likely to share.

[00:00:44] Jordan Harbinger: Welcome to the show. I'm Jordan Harbinger. On The Jordan Harbinger Show, we decode the stories, secrets, and skills of the world's most fascinating people. We have in-depth conversations with scientists and entrepreneurs, spies and psychologists, even the occasional organized crime figure, investigative journalist, former Jihadi, or tech mogul. And each episode turns our guest's wisdom into practical advice that you can use to build a deeper understanding of how the world works and become a better thinker.

[00:01:10] If you're new to the show, or you want to tell your friends about it, I suggest our episode starter packs. And of course, please do tell your friends about it. The starter packs are collections of our favorite episodes, organized by topic. That'll help new listeners get a taste of everything that we do here on the show — topics like persuasion and influence, disinformation and cyber warfare, technology and futurism, crime and cults, and more. Just visit jordanharbinger.com/start or search for us in your Spotify app to get started.

[00:01:38] Today, a lot of things we think we know are false. Let them eat cake — one of the most famous quotes, also fake news, but what is fake news, and what is fake news versus satire? What is misinformation versus disinformation? On this episode, we'll explore some of the history of misinformation and disinformation campaigns. We'll deconstruct how they work and give you the tools you need to disarm them and their influence on you and your loved ones. This is a great conversation if you're interested in how we are manipulated by governments, special interests, and foreign powers. And I'll have a lot more to say about all of this in the show close. So stay tuned for that.

[00:02:16] Now, here we go with Cindy Otis.

[00:02:22] So what is fake news? It's not just something Donald Trump says when he disagrees with you because that's actually where I heard the term first. You know, say what you will about Donald Trump, it was a genius sort of chess move on his part to say, "Hey, everything that is negative is fake news," because it kind of worked, but maybe that's a different show.

[00:02:41] Cindy Otis: Yeah. I mean the term existed long before Donald Trump started using it, it existed in other languages. It's essentially the idea, the actual definition of it, not the way it was co-opted and changed in the way you said, the actual idea is that it's false information that's intentionally spread and made to look like news. That has certainly changed in the contemporary usage of the term to be exactly as you said, but also sort of like any criticism or any opinion that a person doesn't agree with. That's the way the term has been used most recently.

[00:03:10] Jordan Harbinger: So the definition of fake news, essentially — and I remember hearing about this when I learned about World War II and the Holocaust, because in German, the lying press or the Lügenpresse, that was the Hitler thing where it was like, hey, any media that says anything good about the people we're demonizing or is from the people we're demonizing, or is anti-war or says anything bad about the regime or the Third Reich, that's all fake. And it's designed to manipulate you, but everything we're saying is true. And if you argue with us, you're just on the wrong side, you're just wrong. You don't have a different opinion. You are also part of this manipulative cabal (sound familiar, anyone?) that is trying to control the government/the rest of the world. And it sort of has dark intent, which is ironic, of course, coming from literal Nazis.

[00:03:57] So some news is bad and some misinforms you, right? But some news is deliberately false and misinforms you on purpose. Can you tell us kind of what the difference is here? I mean, I guess I sort of explained it, but analysts at the CIA have to be able to tell these things apart, I assume.

[00:04:14] Cindy Otis: Yeah, absolutely. That's one of the main jobs of an analyst is to comb through large amounts of information and intelligence that comes across them, whether it's classified or open source and be able to determine what of it is true and false, and then analyze it from there. Sort of going to your point of the way that the Lügenpresse was painted in Nazi Germany.

[00:04:34] There's actually a lot of parallels to what we see in other countries now where they're targeting actual legitimate press. So this is standards-based news. So it's newspapers, it's media outlets that have things like fact-checkers, that have things like editors, have various reporting processes, ways of measuring the credibility of a source, and that sort of thing. They attack the actual, authentic, legitimate press that has those standards as a way of then sort of cutting out truth and fact, and replacing it with their sort of own version.

[00:05:05] And so that's the main difference, right? So there's the standards-based news. And then there's the sort of fake news. And that could be anything from, you know, somebody with a political agenda, hyperpartisan sort of more opinion-based information that they claim as news, or it could be an actual government organization creating a company, you know, quote-unquote, "news site" or something like that saying, "We're news," and actually it's being run by a government, or it might be run by one of my favorite, which is a weird thing to say, but one of my favorite groups of fake news creators, which were teenagers in Southeastern Europe, just looking to make a buck from pay per click ads.

[00:05:42] Jordan Harbinger: When I learned that a lot of the fake news sites that just made up ridiculous things and then ran Facebook, when I learned they were from Macedonia, I'll say I laughed a little. It wasn't really funny because of the effects on the United States, but I've been to Macedonia and it's not exactly like this technologically advanced superpower, that's got malicious intent. It totally made sense that it was just kids that went, "You know, dumb people can be manipulated? Let's try this thing. Oh my god, we're printing money." And then just went from there.

[00:06:12] Cindy Otis: Yeah. So you had a government that was looking to train up and educate its younger population and create jobs locally because in Macedonia, you know, the average income is a couple of hundred bucks a month. So they were actually trying to increase the skills and training of their citizens. They got these teens educated on things like website creation, SEO, things like that. And then they didn't actually have the jobs to use those skills. So a lot of them ended up being sort of the biggest perpetrators of the fake news websites that we saw starting in 2015. And since then, you know, they originally started making like fan websites, like muscle car websites, like cute pet websites, clickbait sites, that kind of thing.

[00:06:52] And then they sort of had this aha moment of, "You know, we could actually make a lot more money if we turned to the US election and super hyperpartisan or fake content targeting the election." And they did, they made thousands of dollars. A lot of those kids came out of there actually very wealthy, got expensive cars, got nice girlfriends. Two of them went in and bought a bar together. Couple of them started digital marketing companies, that sort of thing.

[00:07:14] Jordan Harbinger: I'd love to hear how Jack the Ripper, you know, you mentioned the Jack the Ripper saga in the book as one of the first reported incidences of widespread fake news in the media.

[00:07:24] Cindy Otis: So Jack the Ripper is the first sort of case study that I talk about in my book. And one of the reasons that I chose it and all of the case studies really was because I was trying to show how events that sort of are widely thought of, or, you know, we think we understand, or at least they're widely known how they were actually influenced by fake news to even affect our understanding of those events today.

[00:07:47] So Jack the Ripper, this is 1888 London. Jack the Ripper was a notorious murderer who went around the lower, more poverty-stricken areas of London that had a lot of immigrants and folks fleeing from different parts of Europe, and worked in the sort of industrial side of the economy, and went around slaying women, women who had turned to prostitution, came from sort of troubled family backgrounds, and that sort of thing.

[00:08:15] The murders were really, really gruesome. I mean, just horrible, bloody affairs. And it started this massive uproar in London, understandably, but it was incredibly affected by rumors and also fake news reporting of the events. And so, you know, people would say, "Well, I saw this person, this shadowy figure, walking down the street." And, you know, there was so much interest in having something to cover about these stories that reporters at the time would write up those rumors and print them as if they were fact or evidence and that sort of thing.

[00:08:47] And the police at the time were just overwhelmed with reports and rumors, fake sightings, and things like that. From historical coverage of the event, it really affected their ability to actually sort through and find the actual evidence, and ultimately track down the murderer. They never did track down the murderer. It turned into what they called the autumn of terror. These letters started showing up in the mail, written in red ink, claiming to be from Jack the Ripper. The first one was signed Jack the Ripper. That's how he got his name.

[00:09:17] It later came out through, you know, analysis of handwriting and stuff like that as these letters were coming in that at least some of the letters probably were written actually by a journalist looking to make headlines.

[00:09:28] Jordan Harbinger: Wow, the earliest known clickbait nonsense, fake news. So you wrote that we should always think about who wrote what and why. And fake news is usually written by people who have a motivation for doing so. Okay. Maybe that's a little obvious, but sometimes that motivation is just clicks though, right? Like we talked about Macedonia, they weren't necessarily trying to sway the election. They probably didn't even care about that at all. They just thought, "All right. If we get another couple of articles that get as many clicks as these last few, I'm going to buy a Ferrari." I mean, that's what I was thinking about at age 20 or however old those guys were.

[00:10:01] Cindy Otis: Yeah. And it's true. The reporters who first tracked these teens down were like, "Why are you doing this? Don't you care that you're helping to destroy democracy?" And they were like, "I mean, look at my lovely girlfriend, look at my fast car."

[00:10:12] Jordan Harbinger: Right.

[00:10:12] Cindy Otis: They didn't care. It wasn't their country. It didn't affect them, right? So, yeah. I mean, I think a couple of reasons why I think it's important to understand sort of the source of information, where is it coming from, it's getting increasingly difficult, I think, to get this country at a point of agreeing on what is true and what is false. And I'm increasingly thinking through my research and analysis that instead of debating, okay, what is valid, you know, political opinion, what is true? What is maybe hyperpartisan versus what is outright false? Where we can have the most impact is just understanding where our information is coming from, who is putting it out, and why. And then leave the citizen reader to sort of make a judgment as to whether it's then acceptable to them or not.

[00:10:58] So, for example, if you are listening to a podcast from an American talking about their trip to China, how wonderful it was, how beautiful the country is, that sort of thing. Okay. That's really interesting. If you now know that that podcaster who's claiming that not only is the country beautiful, but there are no human rights abuses in China and China is the land of the free and it's open and fair, and all of that, was actually paid by the Chinese government. Does that matter to you? I think it should.

[00:11:26] Jordan Harbinger: Yeah.

[00:11:27] Cindy Otis: So a lot of my work focuses on not trying to make a judgment about a particular statement or claim being true or being false, but actually helping people understand how they can investigate where their information is coming from because it's not just showing up in their queues. It's not just by happenstance that it's popping up in their social media feeds. It's not always organic content. It could be from a government. It could be from an organization. It could be from an individual. And so let's look to some tools and tips to help you figure out where is it coming from. And then you, the individual, can make decisions about whether you trust it or not.

[00:12:02] Jordan Harbinger: That stuff is important. You give the example of a podcast, but there are tons of YouTubers. I did a show about this with Laowhy86, if people want to go back and check it out, a few weeks ago or a few months, where he actually had kind of smoking gun evidence that said, "Hey, the CCP is hiring agencies and they're trying to get American YouTubers to post these false COVID videos that say that it comes from America. It comes from the white-tailed deer. It comes from this and that, and the other thing." And they're just like, "Hey, we'll give you 1500 bucks if you post this video on your channel." I've gotten it. They've gotten it. And there are these people that were not so affectionately called the shills and they will take a trip to Xinjiang with a bunch of government minders. And they dance with the same old people and they eat the same food and they go to the same houses and they say, "There's no sign of concentration camps anywhere around the downtown part of a capital city." Like, gee, it's so dumb. And yet, what's that quote? About, if you lie about something enough times, people just start thinking that it's true. That seems to be the plan and it's widespread. And it's a great effective way. I mean, if you have a budget of a couple hundred thousand dollars, you could probably get your BS video out on hundreds of YouTube channels because most YouTubers don't make anything and they don't see the harm in it, or they don't care about the harm in it, most of the time.

[00:13:20] Cindy Otis: Yeah, absolutely. I think the influencer side of this is really fascinating. I think China has long used influencers as a way of promoting their content in foreign elections, in domestic situations as well, to influence international perception of again, their human rights record, and Russia has done the same. We saw it at the start of the war in Ukraine, a number of Russian influencers were all over TikTok, spreading all sorts of false information about who the aggressor was, what Russia was actually doing in the country, and that sort of thing. And TikTok had to clamp down on them.

[00:13:52] Jordan Harbinger: Look, they are very sharp when it comes to this kind of thing. RT, this is a long time ago and I mentioned this on the show as well. RT has tried to hire me in the past. And I was like, "How did you find me?" "Oh, I'm looking at the top podcast charts, finding people who have a preexisting audience, maybe people who aren't necessarily very pro-Russia and we're dangling a couple of hundred grand a year in front of your face to see if we can change your opinion and therefore use your megaphone to blast a bunch of crap."

[00:14:17] And what was slick about this was a lot of what they — and we didn't get too far into the process, because I already knew that RT, Russia Today, was Kremlin bullsh*t, but they wanted just a lot of normal stuff. It was almost like 80 percent, just do your show, talk about whatever you want. And then once or twice a quarter have this person on that is just a complete shill nonsense, pro-Kremlin, ignore all history and facts to the contrary kind of guest and then go back to doing whatever you want to do as long as it doesn't cross these certain lines. It's kind of the same thing with China. It's like, "Hey, do your travel log. Go anywhere you want, Mongolia, we don't care, Italy, but then post this video about how Xinjiang is just a totally cool place and all the Uyghurs are happy."

[00:15:01] It's smart to do that because if you turn influencers into Alex Jones, 95 percent of the audience just goes, "Nope," and gets out of there and just says, "This is a bunch of crap now," but if you sort of drip feed it, then you can start planting those seeds in. And if that drip hits a hundred of the 200 outlets people see on YouTube or wherever, it starts to look credible.

[00:15:23] Cindy Otis: That's a perfect explanation. If you go too extreme, you're narrowing your audience significantly. You're really only messaging to a niche group. If you cover normal standard things, right? An earthquake in this country—

[00:15:35] Jordan Harbinger: Right.

[00:15:35] Cindy Otis: Actual issues related to health, fitness ideas, that kind of thing, and you pull in an audience that way, you're sort of steadily feeding them. It's breadcrumbs, really. You're walking them into more extreme content that they might end up accepting because they've trusted that outlet.

[00:15:51] Jordan Harbinger: Right. It's almost like the YouTube algorithm except sort of a microcosm in each outlet, right? Where it sort of drips you more and more extreme stuff if you watch for 18 hours straight, but if you can slow play it and you can get influencers that are trusted to slowly become more and more extreme in one direction or the other. Sometimes that is caused by money. Sometimes it's caused by mainlining propaganda. The thing is you can't really tell. When I look at some people that I used to think were really smart and sharp, I just want to put a couple of whiskeys in them and be like, "Okay, so are you freaking nuts now? Or are you getting paid by someone to post this crap? Because you look nuts now." You know, I'm not going to get the truth out of these guys most of the time.

[00:16:28] Cindy Otis: And it's hard to do. I think that's why when you're looking at what is the difference between like misinformation and disinformation, it's intention. And intention is really, really hard to judge when you're dealing with individual humans, right? We can guess the intention of a foreign government, right? That sees the United States as an adversary. The intention is pretty clear. Their goals might be different. It might be foreign policy-related, or it might be domestic suppression, that kind of thing. But intention of an individual is really, really hard to determine, which is again, why I go back to looking at the patterns of adversarial behavior, how do they get information out, what tactics do they use. How is this information showing up on my feed or your feed?

[00:17:09] Jordan Harbinger: I found it interesting. You mentioned in the book, fake news is often not designed to change our opinions. It's actually often designed to make us more set in our views by telling us what we want to hear or what we already believe.

[00:17:20] And the reason that's counterintuitive is you look at fake news and you think, oh, they're just trying to persuade me of some belief, but really that's not the case, right? It's sort of hammering nails into an existing belief already with more fuel.

[00:17:32] Cindy Otis: Yeah. You know, using fake news, using disinformation is a long-term game. Research has shown and Brendan Nyhan, a professor at Dartmouth has done some great work on this, Jason Reifler, folks like that have done some great work to show, you know, when looking at, for example, 2016 and Russia's interference in our election, people's beliefs didn't change because they saw one meme, right? Or they saw one false claim from a Russian account. Their beliefs didn't go from, you know, "I'm a total progressive Democrat," on one side to now, "I've seen this meme and now I'm over here voting for Donald Trump." That's not how it worked, right? The content that they consumed was pretty closely aligned to what they already believed. And it just acted as evidence really for them, false evidence, by the way, but evidence for them that they were right. And so they sort of hunkered down and stuck into their little trench.

[00:18:21] Jordan Harbinger: Yeah. Basically, anything shared by crazy Uncle Frank where he captions it with, "Are you paying attention yet?" Or my favorite, "You can't make this stuff up." Half the time or maybe more, actually just totally made up in order to get people who already think a certain way to pay more attention to the account or the website that's shared the nonsense in the first place. Because when I see that stuff and when most, I guess, media literate people see that, they go, "Eh, probably not. Yeah, that's probably a bunch of crap and I've never heard of the NewEnglandPatriot.co newspaper," or whatever sort of nonsense, supposed-to-be-looking-very-patriotic website has the news that no other site has, by the way. And it just reinforces crazy Uncle Frank's belief that the Democrats and Republicans are both members, they're all members of the Illuminati. And it's all a big show and there's not really any opposition and it never has been. And it's just a bunch of crap and it's tough to convince people of new things, but it's so much easier to just get the people who are already convinced of something to share something wildly when you give them a little bit of evidence, real or not, for a preexisting belief.

[00:19:29] Cindy Otis: Yeah, absolutely. I mean, if their inclination is already to distrust, maybe a government agency, maybe a political candidate or a news source or something like that, their inclination is already to distrust that and you supply them with, quote-unquote, "evidence," false evidence that they're right. They're like, "Aha. I knew I was right." And it just solidifies that view for them.

[00:19:52] Jordan Harbinger: You're listening to The Jordan Harbinger Show with our guest Cindy Otis. We'll be right back.

[00:19:57] This episode is sponsored in part by HVMN. That's H-V-M-N. These are drinkable ketones. Yeah, look, I understand you don't even know what that means. I was skeptical at first, but I can definitely tell something is different during workouts. Also, I'm not as hungry later in the day, I'm in a better mood. Ketones give you focus for work that's different/better than coffee. Whenever anybody says, it's brain fuel, I'm like, okay, you're probably just full of crap, but look, even Lance Armstrong uses it. So, you know, it works, but it's not a banned substance or anything weird like that. It's just something your body naturally makes. Just not in the same quantity. I even thought it was the coffee giving me a boost initially because you know, coffee does that. But I tested it anecdotally, of course. And it really is this stuff that's making me feel less, I don't know, angsty, more focused. I'm not sure what to make of it other than they are onto something. So far, the stuff definitely works. I'm really in the zone when I work out, especially it's been a dozen times, I've tried it so far. Not a coincidence, really liking it. Besides, it tastes horrible, like vile. So you know, it works. I mean, if the ketones don't wake you up in the morning, the taste will wake you up in the morning. They've also got a government contract, Special Forces guys are using this stuff and a lot of extreme athletes use it as well. For 20 percent off your order of Ketone-IQ, go to HVMN.com promo code JORDAN. Again that's H-V-M-N.com promo code JORDAN for 20 percent off Ketone-IQ.

[00:21:17] This episode is also sponsored by Thuma. True story, we bought a cheap bed frame online and almost got in a fight/divorce, putting it together because the pieces barely fit and it was so annoying. After using it for a couple of years, one day, I was sleeping and the slats the mattress sat on just literally collapsed. I was basically on the floor and that's when we decided not to skimp on a bed frame. I recommend you avoid that problem as well. We upgraded to The Bed by Thuma, which is sturdy, solid, and handcrafted from eco-friendly, high-quality upcycled wood. We got the walnut color now, but they have espresso, which actually looks really slick. Thuma features modern minimalist design with Japanese joinery. So no screws, none of that, no tools to assemble. Jen did it completely by herself, which took about five minutes, and avoided possibly multiple couples therapy sessions. The bed will last you for life, literally. It's also backed with a lifetime warranty. So they're putting their money where their mouth is. Along with The Bed, we also got The Nightstand and Thuma also has The Side Table and The Tray. You can guess what those are. Plus we love that Thuma plants a tree for every bed and nightstand sold.

[00:22:19] Jen Harbinger: Create that feeling of checking into your favorite boutique hotel suite, but at home with The Bed by Thuma. And now go to thuma.co/jordan and use the code JORDAN to receive a $25 credit toward your purchase of The Bed. Plus free shipping in the continental US. That's T-H-U-M-A.co/jordan and enter JORDAN at checkout for a $25 credit, thuma.co/jordan and enter code JORDAN.

[00:22:45] Jordan Harbinger: If you're wondering how I managed to book all these amazing folks for the show, it is because of my network. I know networking sounds gross. It sounds schmoozy and icky. I don't like that stuff either. It's a dirty word. Let's undo that. I'm teaching you how to build your network for free in a non-schmoozy gross way over at jordanharbinger.com/course. The course is about improving your networking and your connection skills, but also about inspiring other people to develop a personal and professional relationship with you, not just transactional, business-card-in-your-face nonsense. This will make you a better networker and a better connector, but most importantly, it'll make you a better thinker. That's jordanharbinger.com/course. And by the way, most of the guests that you hear on the show already subscribed and contribute to the course. So come join us and you'll be in smart company where you belong.

[00:23:30] Now back to Cindy Otis.

[00:23:35] Let's talk about fake news versus satire. We have The Onion, amazing Onion, the Babylon Bee, which is kind of The Onion for, I suppose, another political side of the aisle. A lot of people don't get it and they share those articles as real, which is something, of course, nobody at The Onion or otherwise ever thought would happen 20-plus years ago when they started it. It was so obviously satire, but see also crazy Uncle Frank, who wasn't sharp enough to distinguish satire from real news and constantly shares satire as real news and goes, "Ah, they are forcing preteens to have abortions in California. They're handing out guns in Alabama to toddlers." I mean, it's just the dumbest thing you can think of. It seems like satire has almost started to do us a disservice because we assume a certain level of media literacy, but it's wrong. It's not there.

[00:24:25] Cindy Otis: Yeah. I mean, part of it is social media moves so quickly and folks are just sort of scanning. Like think about the amount of time that you, or the average person is probably a better example, spends on each piece of content that shows up in their feed. Like we're just constantly scrolling, right? We're reading snippets, we're reading headlines and that sort of thing. And then we're moving on to the next. And so that's where satire ends up being an issue is because we see the headline. A lot of folks already feel like we're living in a dystopia. So add in a little dystopian, but satirical headline and you got people sharing.

[00:24:59] And then the other thing too is, you know, some of these, most of the sites actually have some sort of disclaimer, right? If you just clicked on it, you would see this website is meant to be satire or this website is meant to troll this ideological camp. Anyone who falls for it, ha-ha, shame on them, like that kind of disclaimer. Even when that's pointed out to folks, there's the inclination to — again, because we don't like being wrong, right? To sort of dig in our heels and be like, "Well, but it's so close to reality. It could be true, right? So I'm going to keep promoting or I'm going to keep following it or sharing it." That's one of the issues that we run into and how do we get people more interested in figuring out where their information comes from and not falling for these very obvious things is they have to want to, right? They have to be willing to be wrong on occasion.

[00:25:46] Jordan Harbinger: That's true. And that is a tough one, especially because I think a lot of people who share a lot of fake news, they're often very wrong and they've been wrong about a lot of stuff, their whole lives, because some of the least educated people, and correct me if I'm wrong, some of the least educated people in my circle are the ones that spread the most nonsense online. Those are the people that are always jumping from one multi-level marketing scam to the next thing. They're always spreading the propaganda of the far-left or the far-right, or whatever sort of political aisle they're on to the point where people go, "Oh my gosh, you don't really believe this, right? Come on."

[00:26:22] Those are sort of the loudest voices in social media many times because they don't have anything else to do all day at their job or the job they don't have. It's almost this perverse sort of reverse funnel where like the dumbest people are the loudest. They share the most stuff. They also have the most time on their hands and they have the least amount of media literacy. And they're also the biggest slaves to their emotions. Like fake news is more effective when it plays on people's emotions, right? It gets them to overlook all these factual inaccuracies that they maybe would've noticed before.

[00:26:51] Cindy Otis: Emotion is so incredibly tied to this issue. The most successful conspiracy theories, disinformation campaigns from foreign governments, that sort of thing, those narratives were based on riling up people's emotions. When we're overpowered by things like anger or fear or sadness, depression, that sort of thing, we're not as willing to use critical literacy skills to, you know, look at where information is coming from. We're more likely to share when we feel like we're in constant crisis, we're more likely to share.

[00:27:20] That's why the pandemic was such, I mean, just a swirl of disinformation and conspiracy theories because people around the world felt like our time could be up. Our cities and towns and states are closing. There were a lot of unknowns. We didn't know what this virus was going to do. We didn't have a vaccine at the time and that sort of thing. And people, you know, felt like their lives were on the line. And for many folks, of course, that was true. That sort of state of being in crisis mode ended up generating just a huge amount of false information and conspiracy theories about the pandemic.

[00:27:54] Jordan Harbinger: Disinformation also has its place in conflict though. I mean, I remember in your book and also reading in history, Disney, helping the US military discuss patriotism and generate war support. There was like a cartoon clown Hitler. I don't know if that was Disney or something else during World War II. And that makes sense, but it can also blow up in our face because it creates this cycle of distrust.

[00:28:16] And the example you give in the book is Britain or the UK using fake news about German troops to scare. I think it was to scare China into entering the war. They said Germany boils the dead bodies of its troops to make soap. And then of course, when Britain is trying to report what's happening to the Jews and the Gypsies and the Holocaust where they were actually boiled to make soap, people were like, "Yeah, you're not going to get me twice."

[00:28:39] Cindy Otis: Yeah, absolutely. You know, using disinformation in conflict has been occurring since the beginning of time. One of the oldest examples I talk about in the book actually comes from ancient Egypt during a war. And so it's been a key campaign or a key tactic of military strategy from the beginning. But exactly, as you said, the concern with all of this, and I'm sure this comes as a surprise actually, it usually does coming from the former CIA person, but actually using disinformation against a foreign country can have massive consequences when you're trying to get them actually to believe your information instead, right?

[00:29:12] You've created an environment of mistrust among the citizenry. And so they don't feel like they can trust anything. So why would they trust you, especially if you're involved in the conflict? So it's definitely a double-edged sword. The biggest example we're seeing these days of how a military is using disinformation is during Russia's invasion of Ukraine, which is ongoing. They for weeks peddled disinformation ahead of the campaign saying they weren't planning on attacking even as their military was building up on the border. Oh, it's exercises, right? When they invaded, they pushed the idea that, "Oh, it's not actually an invasion, it's a special operation, it's peacekeeping."

[00:29:47] And they've continued to sow massive threads of disinformation throughout the campaign. I know you've talked about that with other guests. But that's actually disinformation that they have been pushing, targeting Ukraine since the fall of the Soviet Union. It's all building on each other. And that's another interesting aspect of disinformation is how they build upon narratives, right? Again, going back to the idea of disinformation can be like breadcrumbs that are sort of steadily moving people along a path.

[00:30:12] Jordan Harbinger: Especially the assertion that Ukraine is this sort of fascist Nazi government. It's just that they've been using that for decades, I think. Well, at least since the revolution, it's been sort of at a slow boil before that, but then when they got rid of the pro-Moscow — was his name Yanukovych? I always get these guys confused. Then they were like, "Oh, well, suddenly this is a Nazi fascist revolution in the country." It couldn't have anything to do with democracy or young people actually wanting a future. It has to be something, something fascist, which, if you know the Russian mindset at all, is a trigger word and a half because their main enemy was, of course, the Nazis.

[00:30:47] That's like a key part of their history, just like the United States. And so it becomes even more — it's like using Islamic terrorism, except with even more historical roots to rile people up. And bad actors can take advantage of distrust in government by creating an alternative source of misinformation if your government is also constantly misinforming. And Russians are not stupid, right? They know their government. They've been lied to for, I guess, probably like a century or so, or more, actually way more just in the current iterations in the last century. So as long as anybody you know in Russia has been alive, they've been lied to at an industrial scale about pretty much everything, all the time.

[00:31:29] Cindy Otis: Yeah. And you know, for them too — one of the successes of a disinformation campaign can be okay, maybe a person doesn't change their mind, but instead they check out. They decide not to trust anything, right? I think we're seeing a little bit of that with the invasion of Ukraine is that they did the whole argument of, "Uh, Nazi, fascism, all that. We're trying to bring the country back," right? They also did things like, or are still doing things, like peddling fake videos of what are actually actors and actresses on war movie sets getting their costumes and makeup together and saying, "Look, the Ukrainian government is—"

[00:32:05] Jordan Harbinger: Staging Bucha.

[00:32:06] Cindy Otis: "—paying actors."

[00:32:07] Jordan Harbinger: Right. Yeah.

[00:32:08] Cindy Otis: And that plays to the idea that's very popular in several Western countries, including the United States of crisis actors, paid protesters, false flag, that kind of thing. And a lot of those videos went viral on places like TikTok. Well, if you come out as a disinformation researcher, like I do, and say, "Hey, this video is actually from this movie set. They were filming it two years ago in Eastern Europe."

[00:32:31] Jordan Harbinger: Right.

[00:32:31] Cindy Otis: It's not actually true. You're informing people. Yes, there's also the risk and why it makes sort of combating disinformation such a challenging subject. There's also the risk that by putting out that fact-check or that correction, then people will be like, "Oh gosh, well, you just can't trust anything. I can't trust anything on social media anymore. And so I'm not going to believe any video that comes across my feed about Ukraine." But actually Ukraine is perhaps one of the most online wars in contemporary history.

[00:32:57] Jordan Harbinger: For sure. Yeah.

[00:32:59] Cindy Otis: And so there's actually a lot of truth in the videos that are being shared by actually Ukrainians on the ground, for example, providing huge information and insight into what's actually happening in the war. But if you've got a population that has decided that they can't trust anything and they're going to check out, they might not believe that either.

[00:33:17] Jordan Harbinger: Yeah. That is part of the danger. But also, of course, what you're saying is that's part of the goals of misinformation. Look, if I can convince you, that's great. But if all I do is depress you to the point where you just start rewatching Game of Thrones, instead of paying attention to the news cycle at all, even better.

[00:33:34] Same thing with politics, right? Well, the left does this and the right does that. So if you get people to go, "You know what? I'm just not even going to vote. All of it's crap anyway, and it's all controlled by the Illuminati. And if I'm wrong about the Illuminati, then who cares? My life's not going to change." Yeah, then you only get the people who are sort of on the extreme sides voting and you get this hollowed-out center, which is exactly what extremists and people who fund and promote extremism want. I don't want centrist voting if I'm trying to freaking stage a coup here legally or otherwise, right? I don't want centrists anywhere near this. I don't want rational thinkers anywhere near this. I want those people playing Xbox as much as possible. I don't want them to do anything.

[00:34:13] And that's one of the major reasons I do this show is I'm trying to show people that they can decipher the information. It's not necessarily that hard, have an open mind, but not so open that your brain falls out and don't check out because the people who, I mean not to be too Captain America about it, but free America needs you right now, more than ever before, because otherwise the only people voting is crazy Uncle Frank at Thanksgiving, and then the kooky people who think North Korea is actually a great place and we should become more like them. Those kooks on Reddit. Those people already have too much of a voice in my opinion. We want to counteract them and we want to make sure that they are not using our megaphone or getting us to check out. It gets a little depressing. And I understand that, but the solution is not to just block all of it out and say, "Screw it. We can't do anything about it."

[00:35:01] Cindy Otis: Well, we're similarly motivated. We're aligned on our goals and priorities for sure. This is why when I talk about, okay, how do we — because this is the number one question I get whenever I do like classroom presentations and things like that is, "Well, how do we fix this," right? To me, one, the checking out piece is not a solution. The solution also isn't sort of finger-pointing to one particular community or area and saying, "Well, this is just a social media platform issue, or this is just a government regulation issue."

[00:35:28] I wish it was so simple. I wish that we could actually identify, like, if we do this one thing, we'll fix it, right? But that's not actually realistic. It's a multifaceted sort of long-term game of bringing in regulation on things like data privacy, for example, having a more informed population on government education, how do elections actually work? Making sure that our population actually understands like this is the pathway your like absentee ballot takes, right? When you fill it out and you submit it. This is how we count votes. Because otherwise, you have — in the Taiwan national election in 2019, you had conspiracies about disappearing ink. That people were filling out these ballots of disappearing ink. They stuffed it in the machine and the machine automatically reacted with the ink in such a way that the ink disappeared and the ballot was invalidated. Does that sound familiar? Sharpiegate.

[00:36:18] And so if you have a population that doesn't actually know that that's not possible, right? That's not how it works. These are how voting machines work. This is how votes are counted. This is how absentee votes are counted, that sort of thing. It makes them more resilient when they then confront that information that says, "Hey, Sharpiegate." That is a long term. We need to invest in things like public education, community education, election practices. We need to increase education on digital media literacy skills. So you're not just sharing every meme that comes across your feed and then pursue all the other things too, right? Government regulation, working with tech platforms, that sort of thing.

[00:36:54] Jordan Harbinger: You sort of touched on this before. What do you think about the CIA being purveyors of fake news, especially in other countries? This is the part where I turn the tables in a controversial and semi-shocking way by putting the guest on the back foot. And then my YouTube team makes a video with the caption, "Confronting a CIA agent on fake news," and then the commenters get all angry because it's not much of a confrontation. I am curious what you think about this. Because of course, one of the main critiques of this episode is going to be, "Well, she works for the CIA. Could you have picked a less credible expert? You know, or maybe the most credible expert because she's doing it right now, talking about Russia and it's all fake and it's all just designed to get you to believe a certain thing. You rube, Jordan."

[00:37:35] Cindy Otis: Trust me, I get that. I get that too. You know, I actually do address that in the book. I state up front, like, "Hey, we have this history of doing it ourselves at the agency. So I'm not trying to pull any punches. I'm not trying to ignore the history of the agency itself." I think again, people are always surprised when I'm the one sort of on a panel or doing a presentation where I'm the one who's pushing things like transparency, government transparency about its actions, transparency of social media platforms, about things like removing content or blocking accounts, transparency about where your information is coming from.

[00:38:07] And that's because I'm a firm believer in the idea that actually putting out false information does more harm on a society than good. If you're using disinformation as a foreign policy strategy and tactic, you run the risk of enormous harm and of it backfiring. And we saw that with the CIA throughout history, in its interference in places like Iran, all very like well-known public scholarly articles have been written about its involvement in places like Iran and Central America and things like that. And it was incredibly damaging to the CIA's reputation, to public trust in government and that sort of thing, to foreign trust in our policies and priorities abroad.

[00:38:44] And that is something that I think the United States government is going to have to deal with, frankly, for the rest of the country's existence is the damage that was wrought by spreading falsehoods in foreign countries. I think in places like where we're dealing with Russian disinformation, targeting Ukraine. Ukraine is in a position where they don't actually have to spread any falsehoods, right? The bravery of their soldiers, the damage that the Russians are doing, all of that is actually incredibly true. A government like that benefits in no way from spreading false information.

[00:39:15] Jordan Harbinger: To your point, so much fake news has been posted by fake bot accounts on social media or disinformation farms, both at home and abroad over the past, even half-decade, that trust in media has been very eroded. I mean, one of the most common, most brain dead, but most common insults somebody will throw at me when I argue something about Ukraine or the Russians, they'll say, "Ah, this guy gets his news from CNN." And it's like, "Well, actually not really. It's clear that you get your news from RT and it's not even actual news." I'd like to think that I'm talking to experts and reading books and that's a little bit of a better source of information, of course, but the thing is the media has done a lot to erode trust in itself as well.

[00:39:55] You see outlets reporting nonsense constantly. I don't even just mean inconsequential BS. I mean, clickbait headlines so that the paper doesn't go underwater and shows up more on Facebook. You also see left-leaning newspapers, never reporting anything about the Southern border, for example, and right-wing rags reporting made-up or dramatized stolen election nonsense as well. And the Internet troll farms and the foreign agents, yeah, they definitely helped, but man, it's almost like they didn't have to push too hard to get the snowball rolling down this hill.

[00:40:28] Cindy Otis: Yeah. It's definitely an issue that we confront on disinformation. I think when it became public that Russia interfered in our election, it created this whole new line of journalism about disinformation. You know, it's been a double-edged sword, frankly. I think we've run into the fact that the more you report about it, it can inform people. But, again, going back to what we were talking about earlier, it can destroy people's trust in what they see in front of them. Make them decide not to trust anything: news, social media, like nothing, because they think, "Well, you know, everything that I disagree with must be a Russian bot," right?

[00:41:00] There's the harm of that, but there's also the harm of inflating an adversary's capabilities. The reality is Russia does not control the world. They do not control every social media platform. They're not the puppeteer sort of lifting the puppet strings around the world. Not every account that is mean to you or says something that disagrees with your opinion is a Russian bot. And by saying it's a Russian bot or it's a Russian government, you're actually giving them much more credit than they deserve. And I think doing some damage.

[00:41:27] So there is something to the idea that the extensive coverage by media outlets of every single Instagram account that was Russian or suspected Russian or every single account pushing something conspiratorial that turned out to be Kremlin-linked or maybe Kremlin-linked because they once retweeted an RT article, I think that's been quite damaging and is pretty unhelpful.

[00:41:51] Jordan Harbinger: Yeah. We're in the so-called post-truth era and this makes me so sad, right? And it's one of the reasons I do the show because this whole post-truth idea is just cancer in our country and on the whole world, frankly. And the antidote is this educated populace that knows how to think, the mythical educated populace that knows how to think that I'd like to think we're building brick by brick, but it's kind of like building a skyscraper with your bare hands.

[00:42:14] Let's talk about this for a second because it seems like you can't put this toothpaste back in the tube as much as I'm trying right now, right? As much as we're trying right now.

[00:42:23] Cindy Otis: My view on that changes sort of day to day.

[00:42:25] Jordan Harbinger: Yeah, yeah. I know me too. I hear you.

[00:42:27] Cindy Otis: To be honest and my level of optimism—

[00:42:30] Jordan Harbinger: Right.

[00:42:30] Cindy Otis: I think the reality is, you know, technology has created a lot of difficulties in addressing this issue that we frankly didn't have a couple of decades ago. And it's not going away. In fact, we're getting more platforms, we're getting sort of increased technology abilities to deploy content and that kind of thing. We're consuming content differently. Our brains are actually being rewired to a degree in how we look at content, the kinds of content we consume, what we end up trusting and synthesizing. And so we need adaptable strategies to getting messages out, things like digital media literacy.

[00:43:03] The issue is when you're talking to somebody, and I'm sure you've had conversations like this, where, you know, they say something that's incredibly false, right? And you show them a list of credible sources, things that have been vetted, scholarly articles maybe, and that sort of thing. And then, they actually come out with their sort of one journal article or their couple of websites or whatever, and they're low credibility but they look like legitimate sources to that person. So you've got a situation where you're having an argument, mustering your sources and information, and they're mustering their sources of information. And you know the two can't meet.

[00:43:42] Jordan Harbinger: This is The Jordan Harbinger Show with our guest Cindy Otis. We'll be right back.

[00:43:47] This episode is sponsored in part by Pluralsight. At Pluralsight, they believe everyone should have the opportunity to create progress through technology. Pluralsight is a tech workforce development company that provides the solutions that high-performing engineering teams need to tackle today's biggest challenges. Whether you need to build the skills of individuals and teams to tackle mission-critical projects, drive cloud transformation, or help software teams ship reliable, scalable, and secure code, you can harness the collective power of hindsight, foresight, and insight with Pluralsight. Check them out today at pluralsight.com/vision.

[00:44:20] This episode is also sponsored by IPVanish. Do you browse online in incognito mode? Do you think you're being clever? You're protected from advertisers and prying eyes and hackers. Think again. Incognito mode, that's some amateurish right there. If you want to stay truly private and secure on the Internet, you got to get a VPN, folks. IPVanish is a VPN service that helps you safely browse the Internet. It encrypts your data. So private details like passwords, communications, browsing history, all that stuff's going to be completely shielded from falling into the wrong hands. And IPVanish makes you virtually invisible online. Very simple to use, you tap a button, you're instantly protected. You won't even know it's on. I use IPVanish everywhere, especially at coffee shops, airports, and hotels. You ever look in your networking settings at a hotel and you see that you can find everyone else's computer that's connected in every single room? Yeah, that's not good.

[00:45:06] Jen Harbinger: Stop sharing with the world everything you stream, everything you search for, and everything you buy. Take your privacy back today with a brand rated 4.6 out of five on Trustpilot. Get 70 percent off their yearly plan, which is basically nine months free, 30-day money-back guarantee. So go to ipvanish.com/jordan and use promo code JORDAN and claim your 70 percent savings. That's I-P-V-A-N-I-S-H.com/jordan.

[00:45:31] Jordan Harbinger: Hey, thank you so much for supporting the show. I love the fact that you listen. These conversations mean a lot to me. I love the fact that I get to do this for a living. Sometimes it's too good to be true. The sponsors are what keeps the lights on around here and what keeps us in, I don't know, fresh undies. You can find any deal, any discount code, all those complicated URLs, they're all on our deals page, jordanharbinger.com/deals. You can also search for any sponsor using the search box on the website as well. Please consider supporting those who support this show.

[00:46:00] Now for the rest of my conversation with Cindy Otis.

[00:46:06] It is disappointing. And again, it goes down, it comes down to media literacy. You know, I'll be saying something to somebody online or otherwise. And they'll say, "Well, I know this is true because I have people on the ground in Ukraine," and I'll say, "Oh really? Where do they live?" And then they live in Donbas and they're Russians. And I'm like, "Okay, do you think maybe they have a slightly different opinion than somebody who's Ukrainian and lives in the west of the country?" And that their opinion is not a fact. And they just don't understand this.

[00:46:32] And you mentioned this Pew survey that shows the majority of Americans cannot discern facts from opinions, which is just horrifying any way you slice it. Can you discuss this a little bit? Especially the difference, because this is incredibly disappointing and damning.

[00:46:51] Cindy Otis: Yeah. So I think about the 1950s when the companies that made up Big Tobacco got together in the wake of some pretty damning reports, studies that had come out saying, in fact, that smoking can lead to cancer and more reports were sort of coming out by the day. And they were very panicked about what this was going to do to their bottom line. They ended up coming together and deciding that they were going to hire this PR firm and created an organization called the Tobacco Industry Research Committee. It sounded incredibly authentic, legit, made up of scientists and doctors and things like that.

[00:47:23] And those individuals were paid to essentially put out research showing that, "No, tobacco doesn't cause cancer. It's fine." And what do you know? You know, the people who really enjoyed smoking believed them. They sided with them. It wasn't well known at the time that the committee was funded by Big Tobacco. That came out later and they were appropriately sued—

[00:47:46] Jordan Harbinger: Yeah.

[00:47:46] Cindy Otis: —for misrepresenting the information. But if people had actually been confronted in their real lives with, like, a cancer diagnosis or a family or friend or loved one being diagnosed with lung cancer because of tobacco, they actually were confronted with the actual fact and the reality in their own lives. And that's where sort of being able to just discern between fact and opinion can be really tricky for folks because unless people experience something in their individual lives or can sort of see it with their eyes or touch it with their hands, they're more inclined to go with the opinion.

[00:48:20] Jordan Harbinger: This phenomenon is very interesting. People are more likely to label something as a fact if they agree with it and an opinion if they do not. And that is just so dangerous. I really can't overstate it. Again, people are more likely to label something as a fact if they agree with it and an opinion if they don't. What does that mean for news and media consumption? I know that's a big question — I hate to say this, but it's almost like what's the point if people are just going to go, "Well, I don't like that. So that's up for debate and this I do like, so that's true." It's like, what the hell are we even doing?

[00:48:53] Cindy Otis: Yeah, I'm going to need a drink after this conversation, Jordan.

[00:48:56] Jordan Harbinger: I know. Yeah, me too.

[00:48:57] Cindy Otis: No, I think, you know, it's really, really incumbent on news organizations to be really clear on their pieces, on their websites, about what articles are actual reporting and what are opinion and editorial, and have it clearly marked. Like if it were up to me, every opinion page would have like a giant red banner.

[00:49:18] Jordan Harbinger: Right.

[00:49:18] Cindy Otis: I've been disappointed a number of times when news outlets that are otherwise quite trustworthy for the most part, put out a piece, an article that actually sounds quite a lot like reporting, but they label it as opinion and you don't quite know how to judge.

[00:49:32] Jordan Harbinger: Sure.

[00:49:32] Cindy Otis: There's something to be said for, you know, well-thought-out analysis. I occasionally write an editorial here and there based off of years of work and analysis and that sort of thing. But it's important to understand that it comes from an analysis of sources and experiences versus it is an event that I have reported on as a journalist. I can't overstate the importance of news outlets being very, very clear about what is fact and opinion.

[00:49:57] And then I also think the credibility of experts is really important as well. You have cable news sites that bring on political commentators who maybe have one area of expertise, but they're commenting on the entire world. And I think that can be quite damaging in terms of building trust with viewers and giving accurate information and analysis to viewers when you're not really bringing on actual experts of the topics you're covering.

[00:50:23] Jordan Harbinger: Well, we saw that with the vaccination stuff, and I don't want to go down that rabbit hole too far, but we saw, and we still see, chiropractors and foot doctors and general practitioners saying this is safe or not safe because of this. And it's like, whoa, there, you took one or two classes on virology and maybe vaccines 20 years ago in medical school. You're not an expert, but it's like, "Well, I'm a doctor." Okay, fine. I'm a lawyer. You should not have me as a former finance lawyer defend you in your murder trial. But I could probably convince people to hire me to do that because, well, I'm a lawyer and, you know, I've been a lawyer for a long time, and look at me, I got a podcast and everything. I must know what I'm doing.

[00:51:08] That's very dangerous. And so questioning sources in a reasonable way has to happen. And yet there's plenty of, I can't remember who coined this term, but it's like intellectual trespassing, right? Where I say, I know all about this because I'm a lawyer. I know all about this because I'm a doctor. I know all about this because my dad used to work in X industry. And it's like, you don't know anything because you lived with somebody who was in that industry first of all. You don't know anything because you worked in that industry and you never touched this problem. You are deceiving yourself, but more importantly, you're deceiving others that you actually know what you're doing. You can't parse this information any better than somebody who's read, I don't know, 10 blog pieces on it. Or maybe you're at that same level, you think you're better at it because you should be in your own mind, but that is dangerous.

[00:51:57] Cindy Otis: It's also incumbent on the person being asked to talk about issues that are beyond their background and expertise.

[00:52:03] Jordan Harbinger: Right.

[00:52:03] Cindy Otis: I mean, the number of times in which I've been asked to comment on North Korea or something, because I worked in national security and I'm like, "I have no business talking about North Korea. I read the same articles that probably the general population does, but I have never spent any of my professional time on North Korea. I'm absolutely not going to talk about it."

[00:52:18] Jordan Harbinger: "Tell me what's in Area 51, Cindy." "Okay, I have not been there recently."

[00:52:23] Cindy Otis: Yeah, no but you know, you're asking people who perhaps really enjoy the limelight to forego it and perhaps pass it to somebody who's more qualified.

[00:52:32] Jordan Harbinger: Right.

[00:52:32] Cindy Otis: I don't know how much confidence I have in most folks to decide, you know?

[00:52:36] Jordan Harbinger: I am with you. I mean, when we look at some of these people who say, "I'm not doing this for money, I'm doing this to alert the population." Sometimes when we dig a few layers, we see that they are indeed making quite a bit of money speaking on a subject because they're the contrarian that has letters after their name that's willing to say something. Or they just have a narcissistic personality and they love the fact that hundreds of doctors are signing a letter that says they're full of crap and that media outlets are banning them, but they're the darling of all of these sort of weird intellectual dark web wannabe podcasts. And that they're getting a ton of follows on Twitter from kooks all over the world. I mean, they live for this stuff. And money be damned, they're living their best lives right now. And they don't really care that they're wrong.

[00:53:21] Cindy Otis: Yeah. I mean, you're trying to convince people to care about the impact of what they're doing.

[00:53:25] Jordan Harbinger: Right.

[00:53:26] Cindy Otis: And that's always the challenge that I run into in talking with folks about disinformation is like, "So what, who cares? Right? Like I spread something false on social media, like whatever, it's a blip." You have to worry about what you are putting out there and how people maybe are making decisions based on that. That's why, for most people who work in disinformation, what keeps us up at night is potentially being wrong, or putting something out, like an attribution of a disinformation campaign to a foreign adversary, and maybe getting that wrong or something. That keeps us up at night because we know that people are trusting us, right? When we do get it wrong, or when it's maybe not quite what we thought it was, that can have really, really significant impacts on not just our credibility, but the extent to which people trust professionals and experts in their fields.

[00:54:13] So you're asking people to care about the potential harm that they're doing. And, you know, it sort of gets to the idea that on social media and in the whole sort of like Internet environment, I think we're losing our ability to see people on the other side of the screen as human, and therefore, we don't care as much about the damage that might be done to them because they're just an account, right? They're just an avatar.

[00:54:36] Jordan Harbinger: Not to mention, they're also pulling these fear levers, right? And fear, which spreads like a virus (we've sort of talked about this tangentially), can cause people to act in ways they never normally would. Tell me about that anecdote with these two builders in Mexico and this fake child trafficking thing, these poor guys.

[00:54:53] Cindy Otis: Yeah. So this is actually a rumor that has spread all over the world. People in countries like India and Mexico started getting these messages on WhatsApp and Telegram of videos and pictures and things like that, saying these gangs of violent people were coming through and kidnapping children and murdering them. It really spread fear and panic into these communities. And so you had, you know, people sort of looking over their shoulders at any stranger coming into town, being like, "Ah, is this the child kidnapper, who's going to take my kid and murder them that I have to worry about?" These two men came into town, these builders to get supplies in this village in Mexico. And this rumor had been rampant for a while. And the townspeople thought that they were child traffickers. They grabbed these individuals and they burned them alive. This was actually happening all over India as well, around the same timeframe and Indian police in really remote parts of the country had just strings of murders of people who were just passing through town and the townspeople saw them as strangers, killed them, thinking they were child traffickers.

[00:56:01] I listened once to a talk that a local police chief from India gave about how they ended up working to combat that rumor because they were largely in villages with illiterate populations and they were consuming videos and pictures as evidence. And so they had to find a way to counter this rumor without any text because it was an illiterate population primarily, but they had something like 40 to 50 murders in the course of a month because people believed these rumors.

[00:56:29] So that fear and panic, you can't underestimate the extent to which disinformation that threads in fear and panic can cause people to take action. You know, look, we saw it in Buffalo as well at the shooting. The man who killed all of those people in the Buffalo grocery store, he had spent most of the pandemic consuming white supremacist conspiracy theories that promoted fear of people from marginalized communities. And it led him to kill people as a result.

[00:56:56] Jordan Harbinger: To me, this is a classic example of just how, people will think, "Oh, these dumb people couldn't even read. Of course, they're going to believe this." And yet we see people taking shocking — we see our own family members getting radicalized by disinformation. And it's like, we don't even know these people anymore. And it's really hard to bridge that gap and that divide because we see what they post online. We see how they talk and sometimes they take action and it's like, I can't even talk to my cousin anymore because he lives in a different reality entirely.

[00:57:28] Let's talk about cognitive dissonance. Why is it that logic and evidence just completely fail to persuade us a lot of the time?

[00:57:34] Cindy Otis: Well, it goes back to just not liking being wrong. We experience this sort of extreme, both mental and physical discomfort when we're presented with evidence that contradicts our personal views.

[00:57:46] It's actually a fascinating story, what occurred that led to three social scientists coming up with the term cognitive dissonance, where essentially this conspiracy sort of fringe religious group in Illinois believed that the earth was going to be wiped out by a flood. And they were going to be lifted up and taken away by aliens called the Guardians. And they prophesied about dates that the Guardians were going to show up to take them away. And every time they passed those dates without being lifted up or the flood happening, they came up with reasons why it didn't happen. "Well, the Guardians don't like metal and some of you are wearing necklaces. So take the necklaces off and next time they'll for sure be here."

[00:58:23] Cognitive dissonance is the process of feeling extreme discomfort when confronted with evidence that proves that we're wrong. And also coming up with sort of additional justifications for why we're still really right at the end of the day.

[00:58:36] Jordan Harbinger: It sort of shakes hands with confirmation bias, looking for evidence that your beliefs are correct, as opposed to looking at the evidence as a whole. We're just looking to cherry-pick the things that reinforce our existing beliefs.

[00:58:47] How do we become more aware of our bias? Because while I'm not waiting to be picked up off a balcony by extraterrestrials hiding in a ship that's in a comet somewhere, I have bias. And so does everyone else listening to this. How do we become more aware of these types of bias and then at least make an attempt to counteract it?

[00:59:07] Cindy Otis: I think, you know, of all the digital media literacy tips that I talk about pretty regularly, this is actually the hardest one.

[00:59:13] Jordan Harbinger: Sure.

[00:59:14] Cindy Otis: Most of them are pretty easy. You can check the source, you can check the date of something, that kind of thing. Confronting our own personal biases, I think is actually really hard because it puts us in an uncomfortable position of having to have some authentic and maybe some hard conversations internally about ourselves.

[00:59:29] I recommend always sort of coming up with a list of things that make up your background, things that make up you, right? Your circumstance, where you come from, what you believe in, your religion, your political leanings, things like that, and really making an honest list of what are the things that shape my views and opinions. And then you can understand what your triggers are. Like, what are the things that are going to, as a result, make you sort of more inclined to believe them or less inclined to believe them?

[00:59:56] So, for me, it's like, I'm a white woman. I'm a wheelchair user. I lean center-left. I have a national security background. So on national security issues, I tend to lean more on the conservative side. I have worked in tech. I come from the east coast, I'm educated, I have a master's, that sort of thing. So what does this mean for me in terms of how I look at information and what lens I have when I'm consuming that information? How does that influence my opinion? And then from there, I do things like, you know, am I reading widely? Am I reading from a wide body of sources? Do I understand the arguments that other people are making or am I just discounting them because I'm like, "Well, yeah, I don't believe it"? So that sort of thing.

[01:00:37] Jordan Harbinger: Yeah, this is good. There's a lot of tactics in the book, some of which I'll put in the show close. There's ways to sift through fake news online, there's websites that you can use to check things. I'm going to go through a lot of that in the show close, but thank you so much for coming on the show today.

[01:00:51] I mean, we're both obviously very passionate about disinformation and the damage that it's causing, and it just sort of feels like I'm holding a dam together with my freaking hands to keep it from cracking. But it's good to know that there's other people who are at least standing next to me pushing on the same wall.

[01:01:09] Cindy Otis: No, I appreciate what you do in your show, Jordan, like it's not me blowing smoke at all. Like the folks that you have had on, the messages that you give to your listeners, the topics that you take on, I think are really important. Again, I go back to, no one person is going to solve this. No one company is going to solve this. No one government is going to solve this. It really is going to take a whole of society effort. And that's a struggle when you can't get people to align on basic things like fact.